curiosity

researching creative applications of technology

ANTARCTICA

During my Antarctic Fellowship with the Antarctic Division, I engaged with many of the research projects conducted aboard the Aurora Australis and at the Antarctic stations. Portraying this research through an artistic lens is a considerable challenge.

I’m inspired by the concept that Hideaki Ogawa terms “Artistic Journalism”. How do artists address serious issues such as climate change? Communication is one means, but artists can go further, offering understanding and solutions through artistic experiences.

CREATIVE ROBOTS

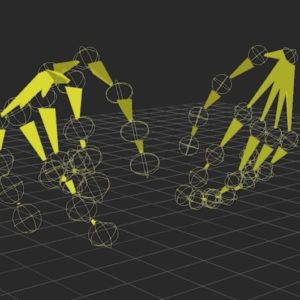

Robots are becoming a greater part of our social and cultural lives and may one day be as prevalent in these settings as they are in industry and manufacturing.

In order to co-habit and co-create with robots we need to see beyond their utility and learn to collaborate and move together. We need to learn to be comfortable in each other’s presence. Human learning and machine learning are interwoven in this future.

SURGERY ROBOTS

I’ve been working with surgeon Chrys Hensman, researchers Mats Isaksson and Oren Tirosh, and PhD student Jaime Hislop, investigating the impact of Robot Assisted Laparoscopic Surgery (RALS) versus Traditional Laparoscopic Surgery (TLS).

We have focussed on outcomes for surgeons, in particular the impacts on surgeons’ wellbeing and career longevity.

VR FOR TRAINING

Safety at Work: an applied research project to integrate immersive experiential learning with positive behaviour support training in the disability sector

Our team developed a VR training application to help disability support workers gain experience with positive behaviour support methods in a safe environment, prior to contact with clients in their homes.